FAQ (Frequently Asked Questions)

Category:

CDS 101/110 Fall 2004

Identifiers: H0 H1 H2 H3 H4 H5 H6 H7 H8 L0.0 L1.1 L1.2 L2.1 L2.2 L2.3 L3.1 L3.2 L4.1 L4.2 L5.1 L5.2 L6.1 L7.1 L9.1

- Can a TA enter a test FAQ?

- Can a FAQ answer have a LaTeX equation embedded?

- When is the recitation for CDS 110a?

- What is "b" on the speed control slide?

- How do you *set* gain on the speed control slide?

- What is the definition of a system? When is something a system and when is it not?

- Aren't most continuous, even mildly complex processes feedback systems?

- How is "open loop" a feedback mechanism? I don't get where the feedback happens?

- What is the reference for the biomolecular regulatory network diagram (slide 7)

- Can a control system include a human operator as a component?

- What classes are available for students who want to take CS/EE/ME 75 and don't want to work on the DARPA Grand Challenge?

- What is the definition of "overshoot" for question 5?

- Is it OK to use non-physical systems (like behavioral systems) for our examples on problem #1?

- Do we measure settling time on #2 from time 0, or from when the step starts?

- Slide 3 - How does the signal know whether to go to output or controller?

- Why can't we control voltage/resistance digitally?

- Will we also learn how to analyze system performance (i.e. robustness, errors, etc)?

- Please clarify Prob#1 of Hw#1. Uncertainty of what? Is this uncertainty introduced by feedback mechanism?

- What recitation should I attend if I am an ACM major?

- What if only one section will be useful to me? (e.g. Biological processes for Bio grad)

- Does each section have different exams, etc?

- Is there any disadvantage to attending only one section?

- How much of the section is taught/run by the instructor? TA?

- What are the units of gain?

- When I press the button to start the hw1cruise.mdl it just beeps at me.

- How do I access the data in the ballbeam structure, saved in the MATLAB workspace?

- Is there a better way to change inputs to a system in Matlab (gains) and run the examples in an automated fashion?

- For the model on slide 13, which line is the foxes, and which is the rabbits?

- Why isn't there a term for the rabbit death rate besides being killed by the foxes?

- In the predator-prey example, where is the fox birth rate term?

- How do you set the parameters of a Simulink simulation?

- I don't understand why you don't use the rabbit death rate or the fox birth rate

- Example 1: The point moving back and forward, what does it map to on the car, and what is the dampening?

- In the mass-spring system modelling the car, one of the springs is fixed to a wall. How does that model the car when that "spring" on the car is connected to the chassis?

- What is the sentence fragment on the fourth bullet of problem 4 supposed to say?

- My frequency response for problem 4 isn't very interesting - the amplitude is constant across the given range.

- There is a mixing of units in problem 2, since the reference is in mph and the model is in SI. Do we need to put in a conversion factor somewhere?

- On problem 3, part b, can we assume a11 is not equal to a22?

- Lastly, is rise time defined as the amount of time for which the signal reaches 95% of its final value, REGARDLESS if it has overshoot and oscillating effects which may bring it back below 95% of the final value ?

- For number 1, when is a block dynamic?

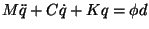

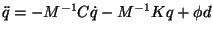

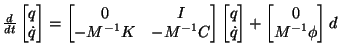

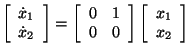

- How did you change the first matrix equation to the second in lecture?

- What initial conditions should we use for the velocity and engine in problem 2?

- What exactly is transient and steady state response?

- Can you provide references for differential equations and linear algebra?

- Simulink: I have constants such as m, g, angle, etc. Is there a way to associate them to parameters that I can change whenever I want?

- For question 2d, what is the rise time for the hill?

- I saw both ode45(@springmass,....) and ode45('springmass',....), what are the difference?

- How much was each problem worth?

- For problem 4 parts b and c, do we need to solve the equations analytically?

- What is a level set?

- Who do we talk to if we have questions about graded homework?

- How do we know how to "twist" our candidate Lyapunov function?

- Are there lecture notes for today's lecture?

- If every equilibrium point can be transformed to the origin and the operated on w/ Lyapunov, how can a system have both stable and unstable equilibrium points?

- Is problem 3b supposed to read m/s?

- Are the percentages in the definition of rise time, overshoot measured from the final value, or the size of the input change?

- For 4c, how do we solve the equation with no given form for v(t)?

- Can I call Richard Murray "Dick" Murray?

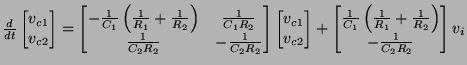

- What is the correct equation for the circuit on slide 11?

- Please, sir, may I have another Lyapunov function example?

- How can we tell from the phase plots if the system is oscillating?

- On slide 8, real eigenvalues & complex eigenvalues look the same, both appear to be asymptotically stable. Why?

- What is an example of a system with Re(\lambda)=0 that is not stable? What if Im(\lambda) is not zero?

- How do I determine how many Jordan blocks a matrix will have for a particular eigenvalue?

- Should there be a C in front of the second term of equation 4.10 in textbook?

- On page 100 of textbook, how do we get equation 4.13, and should the line immediately above this equation read "input is a unit step?"

- How do we define the derivative of a step function as used in the next line? (If it were differentiable at t=0, it would also be continuous!?). how do we interpret the delta "function" in equation 4.14?

- Linmod is giving me all sorts of errors, and I'm getting weird things for 2a. What's wrong?

- If the reachability matrix is not full rank, isn't it possible for the system to be able to reach many X's, just not all of them?

- What do you mean by g(M+m) ~= 1 on slide 7? The units don't work out!

- How do you choose the eigenvalues when using "place" in MATLAB?

- In lecture you said we want to place the eigenvalues of (A+BK), but MATLAB's "place" places eigenvalues of A-BK. What's the deal?

- How come you can always find u(t) such that the integrals come out to be what is needed to drive the state where you want it to go if the reachability condition holds?

- What is a "pole"?

- Prof. Mabuchi mentioned that if the observability matrix is full rank, we may still have to wait some time to determine a derivative. Is this true?

- Can we see that Engine Control example again?

- What on earth is a Laplace domain?

- What was the funny "D with a line through it" symbol on slide 1, and what did the "]" on slide 3 and 4 mean?

- For question 2b, what value of Kp should we use?

- Why does the book say sin(wt)+icos(wt)?

- What is the definition of bandwidth?

- Where can I find definitions for gain margin, phase margin, and stability margin?

- How do I calculate "steady state error"?

- For problem 1, do we plot bode and nyquist plots for the open-loop or closed-loop system?

- How does overshoot relate to phase margin?

- Is it OK for a system to have infinite gain margin?

- Isn't the sensitivity function the same as the function used for steady state error? How are they related?

- For 2a, what do you mean by "less negative phase"?

- How does settling time relate to the root locus plot?

- What's the difference between calculating the tracking error with mag(L) vs. the sensitivity function?

- What do you mean by "If the integral is surjective (as a linear operator), then we can find an input to achieve any desired state"?

-

Can a TA enter a test FAQ?

Submitted by: waydo

Submitted on: September 15, 2004

Identifier:

L0.0

Yep - everything works okey-dokey. For instructions on how to add a FAQ entry, see the information here (instructors and TAs only).

[Back to Top]

-

Can a FAQ answer have a LaTeX equation embedded?

Submitted by: waydo

Submitted on: September 17, 2004

Identifier:

H0

I sure hope so. Here goes nothing:

Yee-ha, it worked!

[Back to Top]

-

When is the recitation for CDS 110a?

Submitted by: waydo

Submitted on: September 27, 2004

Identifier:

L1.1

Stay tuned! We will be handing out a signup sheet on Wednesday and should have recitation assignments early next week.

[Back to Top]

-

What is "b" on the speed control slide?

Submitted by: haomiao

Submitted on: September 27, 2004

Identifier:

L1.1

b is the coefficient of drag. The retarding force on the vehicle due to atmospheric drag, friction, and everything else is lumped together and abstracted as a linear force directly proportional to the vehicle speed (the faster you go, the harder you get pushed back), so the -bv term in the force equation is that retarding force.

[Back to Top]

-

How do you *set* gain on the speed control slide?

Submitted by: ddomitilla

Submitted on: September 27, 2004

Identifier:

L1.1

The variable "k" is usually referred to as the gain of the controller, and it establishes how strong the control action is. Usually, k should be large compared to b and u_hill in order to have a small steady-state error in the presence of disturbances and small sensitivity to parameter variations (disturbance rejection and robustness). In general, k should not be too high, as it may create overshoot and thus hurt performance. The example proposed has a first-order response, so overshoot is not possible and thus in theory the best k is the highest you can provide.

Gain setting is an important problem when you design a real feedback control system. Generally speaking, gain is an artificial amplifier that makes certain features of the information prominent. For example, when you want to track a desired speed, the difference between the desired speed and the actual speed is the feature that you want your system to care about. In this case, the gain makes this feature more 'obvious' to the system, i.e. the system can react more quickly to the difference between the two speeds. But there is no free lunch. As we increase the gain higher and higher, the system becomes so reactive that it over-reacts to even a tiny change in the signal, which degrades performance through too much overshoot or oscillation, or even makes the system unstable. We will learn how to define whether a system is stable or unstable in the coming lectures; these are really important concepts in control. We will also learn how to set 'smart' gains in real system design. In summary, the diverse requirements on the system will greatly shrink your search space for the gain.
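The trade-off between gain and steady-state error can be sketched numerically. The example below is only an illustration in Python (not the course's MATLAB/Simulink model): it assumes the first-order form m*dv/dt = -b*v + k*(v_des - v) + u_hill suggested by the symbols in the answer above, and the values of m, b, and v_des are hypothetical.

```python
def steady_state_speed(k, b, v_des, u_hill=0.0):
    """Closed-form steady state of m*dv/dt = -b*v + k*(v_des - v) + u_hill."""
    return (k * v_des + u_hill) / (b + k)


def simulate_speed(k, b, m, v_des, u_hill=0.0, v0=0.0, dt=0.01, t_end=200.0):
    """Forward-Euler simulation of the same (assumed) first-order model."""
    v = v0
    for _ in range(int(round(t_end / dt))):
        dv = (-b * v + k * (v_des - v) + u_hill) / m
        v += dt * dv
    return v


if __name__ == "__main__":
    m, b, v_des = 1000.0, 50.0, 25.0  # hypothetical mass, drag coefficient, target speed
    for k in (50.0, 500.0, 5000.0):
        v_ss = steady_state_speed(k, b, v_des)
        print(f"k={k:7.1f}  v_ss={v_ss:6.2f}  error={v_des - v_ss:5.2f}")
```

Under these assumptions the steady-state error shrinks as k grows relative to b, and because the model is first order the response never overshoots, which matches the reasoning in the answer.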

[Back to Top]

-

What is the definition of a system? When is something a system and when is it not?

Submitted by: asa

Submitted on: September 27, 2004

Identifier:

L1.1

Very generally, a system is something that can be placed in a physical or metaphorical "box". Slightly more concretely, we might consider a system to be a single object or interacting collection of objects with some number and type of inputs, and some number and type of outputs. We will be most interested in dynamical systems (those which change over time or whose outputs change over time).

Whether something is a system or not depends on what interests you about it. From a dynamical perspective, a fixed, stationary metal block might not be very interesting, with no obvious inputs or outputs, and therefore wouldn't make a good system. However, if we're interested in thermal or electrical properties, it might be an interesting system.

[Back to Top]

-

Aren't most continuous, even mildly complex processes feedback systems?

Submitted by: stephenc

Submitted on: September 27, 2004

Identifier:

L1.1

Short answer: Yes.

Many processes, complex or otherwise, incorporate some form of feedback. The fundamental requirement for feedback is that the system in consideration is somehow modulated, controlled, or changed by the output it produces.

From an engineering perspective, usually you are looking to control a given system. This is accomplished by measuring the output, running some kind of decision algorithm based on the output and the design specs, and finally taking an action that influences the input of the system.

[Back to Top]

-

How is "open loop" a feedback mechanism? I don't get where the feedback happens?

Submitted by: aotang

Submitted on: September 27, 2004

Identifier:

L1.1

Yes, you are right. When we say "open loop", that means there is no feedback. I guess you are confused by Figure 1.1 in Chapter 1. The authors want to show the most general form of a control system there. It can be a feedback control system, like the one shown in subfigure (a), or a non-feedback (open loop) control system, like the one shown in subfigure (b), where system 1 can be used to control system 2 but not vice versa.

A side note: as you will learn later, when you design or analyze a closed loop system, the corresponding open loop system is very likely to be the first thing you study.

[Back to Top]

-

What is the reference for the biomolecular regulatory network diagram (slide 7)

Submitted by: murray

Submitted on: September 27, 2004

Identifier:

L1.1

This is taken from reference 11 in the course textbook:

D. Hanahan and R.A. Weinberg.

The hallmarks of cancer.

Cell, 100:57-70, 2000.

[Back to Top]

-

Can a control system include a human operator as a component?

Submitted by: waydo

Submitted on: September 28, 2004

Identifier:

L1.1

Absolutely! Any time the output of one system influences a second, which in turn influences the first, we have a feedback system. If the purpose of the second system and the feedback connection is to alter the dynamics of the first, we have a control system. If one of the systems is a human operator, we have a "human-in-the-loop" system. A very early example of this is the Wright Flyer, described in the reading. The aircraft itself was unstable, and the pilot was a critical component of stabilizing the system and making controlled flight possible.

The difficulty of analyzing human-in-the-loop systems lies in modeling the behavior of the operator, which in general is much more complex (and non-smooth) than an engineered system. This is a very active area of current research.

[Back to Top]

-

What classes are available for students who want to take CS/EE/ME 75 and don't want to work on the DARPA Grand Challenge?

Submitted by: murray

Submitted on: September 29, 2004

Identifier:

L1.2

There are several options available for students who are interested in taking a course on multi-disciplinary engineering but who are not interested in participating in the DARPA Grand Challenge.

Option 1: Ae/CDS 125abc - this class is a team-based space systems design class that focuses on similar issues to CS/EE/ME 75, but without the hands-on component. The prerequisites are basically the same as CDS 101/110 (Ma 1/2, Ph 1/2). This course is also open to graduate students.

Option 2: wait until a later year - while this year's project for CS/EE/ME 75 is the DARPA Grand Challenge, future projects are likely to vary from year to year. The project for next year has not been determined, but some possibilities include designing, building and launching an autonomous satellite, participating in a search and rescue competition, or building a powerless flight system (glider) that can fly across the Pacific Ocean.

Option 3: take an independent project course - this will miss the team-based aspects of the course, but some of the same educational objectives could be obtained by looking at a small multi-disciplinary project or interacting with an ongoing research project in an appropriate lab. Students interested in pursuing this path should discuss some of the courses and projects available with their faculty advisor.

[Back to Top]

-

What is the definition of "overshoot" for question 5?

Submitted by: asa

Submitted on: September 29, 2004

Identifier:

H1

The homework assignment itself defines overshoot in context as "the maximum amount by which the ball goes past the desired resting point, expressed as a percentage of the commanded position." However, it is unclear whether this might mean the first peak, the first valley (which may be larger in magnitude than any of the peaks) or simply the highest peak, regardless of whether it's the first.

Upon further consultation with a later chapter of the textbook, it gets a little clearer: in Chapter 3, overshoot is defined as the percentage by which the value initially rises above the final value. The book then continues to say that in cases where the first peak is not the largest, the term is ambiguous. For linear systems, this initial rise will always be the largest deviation from the desired value, so there's no ambiguity. The example in problem 5 is nonlinear, so we enter the gray zone.

From a practical standpoint as you do your homework, please consider the TAs. The best policy when there is ambiguity about definitions is to state clearly what definition you are using, and be consistent in that definition.

[Back to Top]

-

Is it OK to use non-physical systems (like behavioral systems) for our examples on problem #1?

Submitted by: waydo

Submitted on: September 29, 2004

Identifier:

H1

This is just great. You need to be sure you can address all aspects of the question with the systems you choose, but feel free to be inventive.

[Back to Top]

-

Do we measure settling time on #2 from time 0, or from when the step starts?

Submitted by: waydo

Submitted on: September 29, 2004

Identifier:

H1

You should measure settling time from when the input starts. For example, if the step time is at 13 seconds and you find the system has settled after 42 seconds, the settling time would be 29 seconds.
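As a rough sketch of that bookkeeping in Python (not part of the assignment, which uses MATLAB), the function below measures settling time from the step time rather than from time 0. The function name, the 2% band, and the synthetic data are all assumptions made for illustration; use whatever band definition the homework specifies.

```python
def settling_time(times, values, step_time, final_value, initial_value=0.0, band=0.02):
    """Time after the step at which the response stays within the band for good."""
    tolerance = band * abs(final_value - initial_value)
    last_outside = None
    # Only samples at or after the step count; remember the last one outside the band.
    for i, (t, y) in enumerate(zip(times, values)):
        if t >= step_time and abs(y - final_value) > tolerance:
            last_outside = i
    if last_outside is None:
        return 0.0   # inside the band from the step onward
    if last_outside == len(times) - 1:
        return None  # still outside the band at the end of the data
    return times[last_outside + 1] - step_time


if __name__ == "__main__":
    # Synthetic data: step at t = 13 s, response inside the band from t = 42 s onward.
    times = [float(t) for t in range(61)]
    values = [0.0 if t < 13 else (1.5 if t < 42 else 1.0) for t in times]
    print(settling_time(times, values, step_time=13.0, final_value=1.0))
```

With this synthetic data the function reproduces the 42 - 13 = 29 second figure from the answer above.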

[Back to Top]

-

Slide 3 - How does the signal know whether to go to output or controller?

Submitted by: waydo

Submitted on: September 29, 2004

Identifier:

L1.2

The signal actually goes to both the output and the controller. Block diagrams simply show the information flow schematically, so we can split and reroute signals arbitrarily on the diagram, even if the information is actually sent along different physical paths.

[Back to Top]

-

Why can't we control voltage/resistance digitally?

Submitted by: waydo

Submitted on: September 29, 2004

Identifier:

L1.2

Sometimes we can. At a very low level, however, virtually all physical devices are controlled by analog signals. It may be, for example, that we use a motor with a digital input, but then at some lower level within the motor there is probably a digital motor controller with an output that is then run through a D/A converter to produce an analog input to the motor. The goals of a particular control task will dictate whether we need to model this level of detail or whether we can view the motor as a purely digital device.

[Back to Top]

-

Will we also learn how to analyze system performance (i.e. robustness, errors, etc)?

Submitted by: waydo

Submitted on: September 29, 2004

Identifier:

L1.2

Definitely. We will look at performance in terms of design specifications in some detail, and cover the basic ideas behind robustness. Later courses in control present extremely powerful tools for evaluating the robustness of a system to uncertainty in terms of both stability and performance.

[Back to Top]

-

Please clarify Prob#1 of Hw#1. Uncertainty of what? Is this uncertainty introduced by feedback mechanism?

Submitted by: jianghao

Submitted on: September 29, 2004

Identifier:

L1.2

In nature, the real dynamics of a system usually evolve with time and are affected by all kinds of noise. Sometimes, to simplify our analysis or to achieve mathematical elegance, we just assume a static model for the system. The difference between the real dynamic model and the assumed static model then accounts for the uncertainty in the open-loop system. Furthermore, even when the system does have a static model, and whether we use black-box methods or model-based methods, the system models we estimate will differ to some degree from the real ones. For example, in many cases we substitute a much simpler model for the real one to simplify the analysis and design process.

Because of this, the designed control system must have some tolerance to this 'error', i.e. the system should perform well not only with the estimated model or system parameters, but also with the real system model or parameters (which are often unknown to some extent). Feedback is not introduced to generate this uncertainty; instead, it suppresses the bad effects of the uncertainty already present in the bare open-loop system. In other words, a closed-loop system can monitor and regulate its behavior more intelligently with the help of the embedded controller.

[Back to Top]

-

What recitation should I attend if I am an ACM major?

Submitted by: waydo

Submitted on: September 30, 2004

Identifier:

L1.2

You should pick a recitation section based on what sounds interesting to you. Since your option doesn't push you strongly in one direction, you may want to try a couple of different recitations to see what interests you or what TA works best for you.

[Back to Top]

-

What if only one section will be useful to me? (e.g. Biological processes for Bio grad)

Submitted by: waydo

Submitted on: September 30, 2004

Identifier:

L1.2

This should be clear to us from the option you indicate on the scheduling form, but you can also write a note on the sheet to make it very clear. If you end up with a problem with your scheduled section, let us know and we will try to accommodate you.

[Back to Top]

-

Does each section have different exams, etc?

Submitted by: waydo

Submitted on: September 30, 2004

Identifier:

L1.2

No, all sections will have the same exams. One of the great strengths of control theory is that the same mathematics can be used to describe a huge variety of problems just by changing words in the verbal problem description. The different recitation sections will cover exactly the same topics, just drawing examples from different application areas.

Some homeworks will include a set of problems from different application areas that you may choose from based on your interests, so to some extent the homework will be customized to your recitation.

[Back to Top]

-

Is there any disadvantage to attending only one section?

Submitted by: waydo

Submitted on: September 30, 2004

Identifier:

L1.2

No. All sections will cover the same material, so you should get the information you need from any of them. You are more than welcome to try attending different sections if you like, but it isn't necessary to succeed in the course.

[Back to Top]

-

How much of the section is taught/run by the instructor? TA?

Submitted by: waydo

Submitted on: September 30, 2004

Identifier:

L1.2

The recitation sections will be entirely taught by the TAs. We meet weekly with the instructor to make sure the right material is covered, but the TAs will use their domain-specific expertise to create examples for their recitation sessions.

[Back to Top]

-

What are the units of gain?

Submitted by: waydo

Submitted on: October 1, 2004

Identifier:

H1

For example, you need to plot gain on a graph - what units should you use?

The units of gain vary depending on application. For example, if you have a servo control mechanism, you may have gain in units of volts/degree, i.e. the number of volts applied to the motor per degree of error. In other cases gain is a ratio of magnitudes and is truly unitless. In general we don't worry about specifying the units on plots, etc.

[Back to Top]

-

When I press the button to start the hw1cruise.mdl it just beeps at me.

Submitted by: waydo

Submitted on: October 1, 2004

Identifier:

H1

Simulink beeps when it's finished with a simulation, which is probably pretty fast for this model. The model doesn't give you any visible output, but the "To Workspace" blocks log the data to the MATLAB workspace. If you type "who" in the MATLAB command window after running the model you will see the logged variables. At this point you can, for example, "plot(time,vel)" to get a plot of velocity vs. time for the simulation.

[Back to Top]

-

How do I access the data in the ballbeam structure, saved in the MATLAB workspace?

Submitted by: asa

Submitted on: October 1, 2004

Identifier:

H1

To burrow into the structure, use a "dot" syntax. That is, if you just type "ballbeam" in the workspace, it tells you that the structure is made up of "time", "signals" and a name. "ballbeam.signals" is made up of "values" and a dimension (4). If you look at the Simulink model, you can see that the first of the "values" is what's going to the scope; the other three are trashed. So, the data you want is in the first column of the vector "ballbeam.signals.values".

[Back to Top]

-

Is there a better way to change inputs to a system in Matlab (gains) and run the examples in an automated fashion?

Submitted by: jianghao

Submitted on: October 4, 2004

Identifier:

L2.1

Sure you can. By using a Bode plot, you can automatically get the system response under different input frequencies (this assumes the inputs can be decomposed into sine functions with different frequencies). To get a Bode plot, you only need to tell MATLAB the transfer function of the system (which we will learn about very soon); MATLAB does the rest. MATLAB can also generate the output for other kinds of input, e.g. a step function.
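To make the idea of sweeping over input frequencies concrete, here is a small Python sketch (an illustration only, not MATLAB's own Bode machinery). It evaluates a hypothetical first-order transfer function G(s) = gain / (time_constant*s + 1) at s = j*omega; the transfer function and its parameter names are assumptions chosen for the example.

```python
import cmath
import math


def frequency_response(omega, gain=1.0, time_constant=1.0):
    """Magnitude and phase (degrees) of G(s) = gain / (time_constant*s + 1) at s = j*omega."""
    g = gain / complex(1.0, time_constant * omega)
    return abs(g), math.degrees(cmath.phase(g))


if __name__ == "__main__":
    # Sweep a few frequencies automatically instead of re-running by hand.
    for omega in (0.1, 1.0, 10.0, 100.0):
        mag, phase = frequency_response(omega)
        print(f"omega={omega:6.1f} rad/s  |G|={mag:.4f}  phase={phase:7.2f} deg")
```

The same loop structure works for sweeping a gain instead of a frequency: put the parameter you want to vary in the `for` loop and collect the results.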

[Back to Top]

-

For the model on slide 13, which line is the foxes, and which is the rabbits?

Submitted by: asa

Submitted on: October 4, 2004

Identifier:

L2.1

The lower-amplitude, blue line is the rabbits; the higher-amplitude green line is the foxes. You can tell because the rabbit line peaks first in each cycle, which makes sense since it's the prey.

[Back to Top]

-

Why isn't there a term for the rabbit death rate besides being killed by the foxes?

Submitted by: asa

Submitted on: October 4, 2004

Identifier:

L2.1

The rabbit birth rate is really the "net" birth rate -- it incorporates the birth rate as well as the death rate if there were no foxes. Similarly for the foxes; the death rate is the "net" death rate.

[Back to Top]

-

In the predator-prey example, where is the fox birth rate term?

Submitted by: waydo

Submitted on: October 4, 2004

Identifier:

L2.1

In the model from class it is assumed that the fox birth rate depends only on the supply of rabbits for food, so it is included as the interaction term.
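The role of the interaction term can be seen in a short Python sketch. The slide's exact equations are not reproduced in this FAQ, so the discrete-time form below (net rabbit birth rate b_r, net fox death rate d_f, interaction coefficient a) is an assumption, and the parameter values are hypothetical.

```python
def predator_prey_step(rabbits, foxes, b_r, d_f, a):
    """One step of an assumed discrete-time predator-prey model."""
    # Rabbits: net births minus losses to predation (the interaction term).
    next_rabbits = rabbits + b_r * rabbits - a * foxes * rabbits
    # Foxes: net deaths plus growth that exists only through the interaction term.
    next_foxes = foxes - d_f * foxes + a * foxes * rabbits
    return next_rabbits, next_foxes


if __name__ == "__main__":
    b_r, d_f, a = 0.6, 0.7, 0.014  # hypothetical parameter values
    rabbits, foxes = 60.0, 40.0
    for step in range(10):
        print(f"step {step:2d}: rabbits={rabbits:8.2f}  foxes={foxes:8.2f}")
        rabbits, foxes = predator_prey_step(rabbits, foxes, b_r, d_f, a)
```

In this form, setting the rabbit population to zero leaves the foxes with only the death term, which is the sense in which the fox "birth rate" lives entirely in the interaction term.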

[Back to Top]

-

How do you set the parameters of a Simulink simulation?

Submitted by: waydo

Submitted on: October 4, 2004

Identifier:

H1

This depends on what you mean by "parameters." There is a menu found under the "Simulation" menu called "Simulation parameters..." that lets you set things like the simulation duration as well as control some of the details of the integrator.

If you mean things like gains and other block parameters, you can access them by double-clicking on the block you want to change.

[Back to Top]

-

I don't understand why you don't use the rabbit death rate or the fox birth rate

Submitted by: aotang

Submitted on: October 4, 2004

Identifier:

L2.1

For this question, one can refer to asa's answer to the question about the rabbit death rate. Another possible interpretation, which is adopted in the book, is to assume rabbits never die other than by being eaten. This is reasonable, especially in the strong interaction case. Also note that the last term of the second equation can be viewed as a rough model of the fox birth rate, which is affected by the number of prey available.

As admitted in the book, "This simple model makes many simplifying assumptions....--but it often is sufficient to answer simple questions about the system." It is definitely good to think carefully about the underlying assumptions a model makes. However, the most complete model is not necessarily the best, because it may be too complicated to give any useful insight. Hence one should aim for the level of simplification that best suits one's needs.

"All models are wrong (inaccurate), some are useful".

[Back to Top]

-

Example 1: The point moving back and forward, what does it map to on the car, and what is the dampening?

Submitted by: haomiao

Submitted on: October 4, 2004

Identifier:

L2.1

The point moving back and forth corresponds to the bottom of the tire. The input forcing is the ground, so when the ground goes up and down it also forces the tire up and down. Obviously this is a very simplified model which wouldn't take into account gravity. The damping would represent the fluid in the suspension shock absorber.

[Back to Top]

-

In the mass-spring system modelling the car, one of the springs is fixed to a wall. How does that model the car when that "spring" on the car is connected to the chassis?

Submitted by: waydo

Submitted on: October 4, 2004

Identifier:

L2.1

If the weight of the car is very large compared to the effects of the driving force on the suspension, then modelling the car as a fixed wall with springs is not a bad approximation. However, if that is not the case, the boundary conditions needed to model a car's suspension system may be different. You may leave out the wall and have the weight of the car supported by a spring.

[Back to Top]

-

What is the sentence fragment on the fourth bullet of problem 4 supposed to say?

Submitted by: waydo

Submitted on: October 5, 2004

Identifier:

H2

Nothing - it was left over from an earlier draft of the problem. The homework on the web site has been updated.

[Back to Top]

-

My frequency response for problem 4 isn't very interesting - the amplitude is constant across the given range.

Submitted by: waydo

Submitted on: October 5, 2004

Identifier:

H2

There was an error in the frequency range you were given. You should do the frequency response for 1 to 100 rad/s. The homework on the web site has been updated.

[Back to Top]

-

There is a mixing of units in problem 2, since the reference is in mph and the model is in SI. Do we need to put in a conversion factor somewhere?

Submitted by: haomiao

Submitted on: October 5, 2004

Identifier:

H2

You DO in fact need a conversion gain between SI and English units. You just need to convert the reference input from mph to m/s.
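For reference, the conversion factor follows from 1 mile = 1609.344 m and 1 hour = 3600 s. The Python snippet below is only a sketch of the arithmetic; the function name is hypothetical, and in the Simulink model the same number would simply go into a gain on the reference input.

```python
METERS_PER_MILE = 1609.344
SECONDS_PER_HOUR = 3600.0
MPH_TO_MPS = METERS_PER_MILE / SECONDS_PER_HOUR  # 0.44704 m/s per mph


def mph_to_mps(speed_mph):
    """Convert a speed from miles per hour to meters per second."""
    return speed_mph * MPH_TO_MPS


if __name__ == "__main__":
    print(f"55 mph = {mph_to_mps(55.0):.4f} m/s")
```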

[Back to Top]

-

On problem 3, part b, can we assume a11 is not equal to a22?

Submitted by: waydo

Submitted on: October 10, 2004

Identifier:

H2

Yes. If a11 and a22 are equal the solution becomes somewhat more complicated (although the answer is the same).

[Back to Top]

-

Lastly, is rise time defined as the amount of time for which the signal reaches 95% of its final value, REGARDLESS if it has overshoot and oscillating effects which may bring it back below 95% of the final value ?

Submitted by: haomiao

Submitted on: October 5, 2004

Identifier:

H2

Rise time is ALWAYS the time it takes the system to go to 95% for the FIRST time. It is a measure of how fast the system reacts to a change in reference input, so you always want to know how quickly the system initially gets near the reference, even if it then overshoots or undershoots away from it.
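The "first time" rule is easy to express in code. The Python sketch below is an illustration under stated assumptions: the function name and the sample data are made up, the 95% threshold is measured from the initial value toward the final value, and no interpolation between samples is attempted.

```python
def rise_time(times, values, step_time, final_value, initial_value=0.0, fraction=0.95):
    """Time from the step until the response FIRST reaches the given fraction of its change."""
    threshold = initial_value + fraction * (final_value - initial_value)
    rising = final_value >= initial_value
    for t, y in zip(times, values):
        if t < step_time:
            continue
        reached = (y >= threshold) if rising else (y <= threshold)
        if reached:
            return t - step_time  # stop at the first crossing; later dips are ignored
    return None  # the threshold was never reached in the data


if __name__ == "__main__":
    times = [float(t) for t in range(10)]
    # Oscillatory response: crosses 95% at t = 2, then dips back to 0.9 at t = 4.
    values = [0.0, 0.5, 0.96, 1.2, 0.9, 1.05, 0.98, 1.0, 1.0, 1.0]
    print(rise_time(times, values, step_time=0.0, final_value=1.0))
```

The later dip below 95% at t = 4 does not change the result, which is the point made in the answer above.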

[Back to Top]

-

For number 1, when is a block dynamic?

Submitted by: haomiao

Submitted on: October 5, 2004

Identifier:

H2

Whether or not a system is dynamic depends on the presence or absence of internal states. In the case of a static map, the input is mapped directly to the output, so in terms of the A, B, C, D form the A, B, and C matrices are zero matrices, with y = Du being the only equation needed to describe the system. Thus there is nothing dynamic or changing within the system/block.

[Back to Top]

-

How did you change the first matrix equation to the second in lecture?

Submitted by: waydo

Submitted on: October 6, 2004

Identifier:

L2.2

We start with the first equation:

Now solve for the second derivative of q to obtain

This along with the simple equation

gives us the second matrix equation:

[Back to Top]

-

What initial conditions should we use for the velocity and engine in problem 2?

Submitted by: haomiao

Submitted on: October 8, 2004

Identifier:

H2

For parts a & b just use zero, since you're getting the open-loop response. It doesn't actually matter too much what initial values are used for parts c & d. Since the system is closed-loop, the controller will eventually settle the system to the appropriate configuration for whatever the reference speed is. v0 can be whatever your initial reference is, and you can make a guess at what the initial engine force should be. Just make sure that you set the hill and step inputs to occur after any initial oscillations have damped out.

From Asa: Regarding the torque for parts c and d, you can pick an initial speed, and then use equations (1) and (2) to solve for the steady state (all derivatives = 0) torque needed to maintain that speed.

[Back to Top]

-

What exactly is transient and steady state response?

Submitted by: haomiao

Submitted on: October 8, 2004

Identifier:

H2

The transient response is the initial fluctuation a system undergoes when external forcing first occurs, and the steady state response is the behavior the system settles into after a long period of time has passed. Basically, the transient response is the natural response of the system to an initial condition; it corresponds to the homogeneous solution of whatever differential equation defines the system, and usually dies down to 0. The steady state response corresponds to the nonhomogeneous (particular) solution caused by the forcing, and does not die down as t -> infinity.
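To make the split concrete, here is a small sketch for a hypothetical first-order system xdot = -a*x + b*u with a unit step input (the system and the values of a, b, and x0 are assumptions chosen for illustration, not taken from the homework):

```python
import math

def step_response_parts(t, a=2.0, b=4.0, x0=0.0):
    """Return (transient, steady_state) for xdot = -a*x + b*u with u = 1."""
    steady_state = b / a                                 # particular solution (constant)
    transient = (x0 - steady_state) * math.exp(-a * t)   # homogeneous solution, decays to 0
    return transient, steady_state

for t in (0.0, 0.5, 1.0, 5.0):
    tr, ss = step_response_parts(t)
    print(f"t={t:4.1f}  transient={tr:+.4f}  steady={ss:.4f}  total={tr + ss:.4f}")
```

The transient column shrinks toward zero while the steady-state column stays fixed, which is the behavior described above.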

[Back to Top]

-

Can you provide references for differential equations and linear algebra?

Submitted by: waydo

Submitted on: October 8, 2004

Identifier:

L2.3

Linear Algebra:

- Strang, "Linear Algebra and its Applications"

Differential Equations:

- Apostol, "Calculus vol II", Chapters 1-7

- Perko, "Differential Equations and Dynamical Systems" - this is actually a very advanced book, but chapter 1 covers and expands on the material we discussed in class and is reasonably straightforward.

[Back to Top]

-

Simulink: I have constants such as m, g, angle, etc. Is there a way to associate them to parameters that I can change whenever I want?

Submitted by: asa

Submitted on: October 8, 2004

Identifier:

H2

You should be able to use variable names for parameters in the Simulink blocks. For example, if you want to have a variable for the initial velocity of the car, you can put "k" in the "initial conditions" section of that block's parameter window, and then set the value of k (e.g. "k=55;") in the workspace.

[Back to Top]

-

For question 2d, what is the rise time for the hill?

Submitted by: haomiao

Submitted on: October 9, 2004

Identifier:

H2

There isn't one. The hill is a disturbance, not a reference input. Rise time is a measure of how fast a system responds to a reference input, so it doesn't really apply in this case. Yes, this is a silly question, and yes, we will be taking it out.

[Back to Top]

-

I saw both ode45(@springmass,....) and ode45('springmass',....). What is the difference?

Submitted by: aotang

Submitted on: October 11, 2004

Identifier:

L3.1

Both 'springmass' and @springmass refer to the function springmass, and both should work. There is no difference here.

[Back to Top]

-

How much was each problem worth?

Submitted by: waydo

Submitted on: October 12, 2004

Identifier:

H1

Each problem was worth 10 points, which will generally be the case. Occasionally we may decide to assign 20 points to a particularly hard problem. Regardless of the total number of points, all assignments will be weighted equally when calculating grades.

[Back to Top]

-

For problem 4 parts b and c, do we need to solve the equations analytically?

Submitted by: waydo

Submitted on: October 12, 2004

Identifier:

H3

Yes. You should solve them analytically (leaving variables as variables), then plug in the given parameters for the purposes of plotting. For (a), you can plug in the initial conditions from the start, but leave zeta and omega as variables. It's OK to take A = 1 for the forcing, since what you're plotting in the end is the ratio of the amplitude of the motion to the amplitude of the driving.

[Back to Top]

-

What is a level set?

Submitted by: aotang

Submitted on: October 13, 2004

Identifier:

L3.2

The level set of a differentiable function f: R^n->R corresponding to a real value c is the set of points

{(x1,...,xn) in R^n : f(x1,...,xn) = c}

If n = 2, the level set is a plane curve known as a level curve. If n = 3, the level set is a surface known as a level surface.

(The definition above is taken from MathWorld.)
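As a quick illustration with n = 2, the sketch below uses the assumed function f(x, y) = x^2 + y^2, whose level set for c = 1 is the unit circle (the function and helper names are chosen only for this example):

```python
import math

def f(x, y):
    """Example function; its level sets are circles centred at the origin."""
    return x * x + y * y

def on_level_set(x, y, c, tol=1e-9):
    """True if (x, y) lies on the level set f(x, y) = c, up to a tolerance."""
    return abs(f(x, y) - c) <= tol

# Points on the unit circle all lie on the level curve f = 1.
points = [(math.cos(th), math.sin(th)) for th in (0.0, 1.0, 2.5)]
print([on_level_set(x, y, 1.0) for x, y in points])
```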

[Back to Top]

-

Who do we talk to if we have questions about graded homework?

Submitted by: waydo

Submitted on: October 13, 2004

Identifier:

L3.2, H1

You should send an email to the ta list (cds101-tas@cds), so the TA who graded the problem you have a question on can respond.

[Back to Top]

-

How do we know how to "twist" our candidate Lyapunov function?

Submitted by: waydo

Submitted on: October 13, 2004

Identifier:

L3.2

This is the difficulty with proving stability with Lyapunov functions - although a Lyapunov function is guaranteed to exist for a stable equilibrium point, finding it may be hard. The energy plus a small "twist" method works well for mechanical systems, and just requires you to add a small cross term (i.e. a term in x*xdot). The best way to find this is to put in such a term with a parameter out front (i.e. alpha*x*xdot), then see if you can adjust the parameter to make Vdot negative definite. The TAs will be doing an example of this in recitation this week.
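The parameter search can be sketched for an assumed unit-mass spring-damper, xddot + b*xdot + k*x = 0 (this system is an illustration, not necessarily the one from lecture). With V = (1/2)*xdot^2 + (1/2)*k*x^2 + alpha*x*xdot, direct differentiation gives Vdot = -(alpha*k*x^2 + alpha*b*x*xdot + (b - alpha)*xdot^2), so both V and -Vdot are quadratic forms whose definiteness can be checked with Sylvester's criterion:

```python
def is_positive_definite_2x2(m11, m12, m22):
    """Sylvester's criterion for the symmetric matrix [[m11, m12], [m12, m22]]."""
    return m11 > 0 and m11 * m22 - m12 * m12 > 0

def twist_works(alpha, b=1.0, k=1.0):
    """True if V is positive definite and Vdot is negative definite."""
    # V = [x, xdot] P [x, xdot]^T with P = [[k/2, alpha/2], [alpha/2, 1/2]]
    v_pd = is_positive_definite_2x2(k / 2, alpha / 2, 0.5)
    # -Vdot = [x, xdot] Q [x, xdot]^T with Q = [[alpha*k, alpha*b/2], [alpha*b/2, b - alpha]]
    vdot_nd = is_positive_definite_2x2(alpha * k, alpha * b / 2, b - alpha)
    return v_pd and vdot_nd

for alpha in (0.0, 0.1, 0.5, 0.9):
    print(alpha, twist_works(alpha))
```

With alpha = 0 (pure energy) Vdot is only negative semidefinite, which is why the small cross term is needed; too large an alpha breaks definiteness again.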

[Back to Top]

-

Are there lecture notes for today's lecture?

Submitted by: waydo

Submitted on: October 13, 2004

Identifier:

L3.2

Sorry, there are no lecture notes for this lecture. However, all of the material is in the book (AM04, Chapter 3) on the website.

[Back to Top]

-

If every equilibrium point can be transformed to the origin and then operated on w/ Lyapunov, how can a system have both stable and unstable equilibrium points?

Submitted by: haomiao

Submitted on: October 13, 2004

Identifier:

L3.2

The reason that you want to transform points to the origin is so that you can look at local stability and ignore the higher order (O(3) and above) terms. The actual location of the equilibrium point still appears in the functions you use to do the analysis, however. For example:

Say you have xdot = f(x), with eq. pt. Xe. Then you do the transform:

z = x - Xe, so zdot = xdot = f(x), but x = z + Xe, so

f(x) = f(z + Xe), so the information about the actual location of the eq. point is still in the functions, and when you do the stability analysis for f(z+Xe) that'll show the stability of the actual equilibrium point you're looking at.
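A short sketch of this idea, using an assumed damped pendulum x1dot = x2, x2dot = -sin(x1) - b*x2 (not a system from the lecture). It has equilibria at x1 = 0 and x1 = pi; shifting each to the origin and linearizing f(z + Xe) at z = 0 gives different Jacobians, and therefore different stability conclusions:

```python
import cmath
import math

def jacobian_eigenvalues(x_e, b=0.5):
    """Eigenvalues of the linearization about the equilibrium (x_e, 0).

    The Jacobian of f(z + Xe) at z = 0 is [[0, 1], [-cos(x_e), -b]],
    whose characteristic polynomial is s^2 + b*s + cos(x_e).
    """
    c = math.cos(x_e)
    disc = cmath.sqrt(b * b - 4.0 * c)
    return (-b + disc) / 2.0, (-b - disc) / 2.0

for name, x_e in (("hanging down", 0.0), ("upright", math.pi)):
    eigs = jacobian_eigenvalues(x_e)
    stable = all(e.real < 0 for e in eigs)
    print(name, eigs, "stable" if stable else "unstable")
```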

[Back to Top]

-

Is problem 3b supposed to read m/s?

Submitted by: haomiao

Submitted on: October 14, 2004

Identifier:

H3

Only if you're working on the set in a godless red commie country that doesn't use proper English units like hogsheads, slugs, and furlongs. For the rest of us, we'll stick to our good ol' MPH to be consistent with 3a.

[Back to Top]

-

Are the percentages in the definition of rise time, overshoot measured from the final value, or the size of the input change?

Submitted by: asa

Submitted on: October 17, 2004

Identifier:

L3.1

They are measured from the size of the input change. That is, for a system whose target has changed from 10 to 20, the rise time is the time to go from 10.5 to 19.5. If it overshoots to 21, that's 10% overshoot.
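The arithmetic in this answer can be written out as a small sketch (the helper names are made up for illustration):

```python
def percent_overshoot(initial, final, peak):
    """Overshoot as a percentage of the size of the input change."""
    return 100.0 * (peak - final) / (final - initial)

def rise_time_levels(initial, final, lo=0.05, hi=0.95):
    """Signal levels between which the rise time is measured."""
    step = final - initial
    return initial + lo * step, initial + hi * step

print(percent_overshoot(10.0, 20.0, 21.0))
print(rise_time_levels(10.0, 20.0))
```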

[Back to Top]

-

For 4c, how do we solve the equation with no given form for v(t)?

Submitted by: asa

Submitted on: October 18, 2004

Identifier:

H3

When we say to "compute the frequency response of" a system, what we mean is that you should put in a sinusoidal input (such as v(t) = A sin (wt)) and see how the amplitude and phase depend on the frequency of the driving.
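As a sketch of what this means, consider a hypothetical first-order system xdot = -a*x + v(t), which is not the homework system. For a sinusoidal input at frequency w, the steady-state output is a sinusoid at the same frequency, scaled by |G(iw)| and shifted by the phase of G(iw), where G(s) = 1/(s + a):

```python
import cmath
import math

def frequency_response(w, a=1.0):
    """Gain and phase (degrees) of G(s) = 1/(s + a) evaluated at s = i*w."""
    g = 1.0 / complex(a, w)
    return abs(g), math.degrees(cmath.phase(g))

for w in (0.1, 1.0, 10.0):
    gain, phase = frequency_response(w)
    print(f"w={w:5.1f} rad/s  gain={gain:.4f}  phase={phase:.1f} deg")
```

For the homework you would carry out the same steps analytically, keeping the parameters as variables.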

[Back to Top]

-

Can I call Richard Murray "Dick" Murray?

Submitted by: waydo

Submitted on: October 18, 2004

Identifier:

L4.1

You certainly can, although I wouldn't recommend it.

[Back to Top]

-

What is the correct equation for the circuit on slide 11?

Submitted by: waydo

Submitted on: October 18, 2004

Identifier:

L4.1

[Back to Top]

-

Please, sir, may I have another Lyapunov function example?

Submitted by: asa

Submitted on: October 18, 2004

Identifier:

L4.1

For more examples, I encourage you to check out Differential Equations and Dynamical Systems by Lawrence Perko, which is currently being used as a textbook by CDS 140. In particular, section 2.9 starting on page 129 has several good examples.

[Back to Top]

-

How can we tell from the phase plots if the system is oscillating?

Submitted by: haomiao

Submitted on: October 18, 2004

Identifier:

L4.1

If the phase plot takes the form of a spiral or a circle, then the system is oscillating. Take a look at any of the spiral plots and trace one of the lines around an equilibrium point, looking at either x1 or x2. You'll notice that coordinate increasing as you spiral around, then decreasing as you come back towards the equilibrium point, increasing again as you move past it, then decreasing again as you come in on your spiral and so on.

[Back to Top]

-

On slide 8, real eigenvalues & complex eigenvalues look the same, both appear to be asymptotically stable. Why?

Submitted by: haomiao

Submitted on: October 18, 2004

Identifier:

L4.1

The stability of a system is determined by the real parts of its eigenvalues, so if the real parts of all the eigenvalues are negative then the system is asymptotically stable no matter what the imaginary parts are. The imaginary part determines the phase response of the system, and contributes to the oscillatory behavior of the system.
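A small sketch with two assumed 2x2 dynamics matrices (chosen for illustration, not the ones on the slide) shows one case with real eigenvalues and one with complex eigenvalues, both with negative real parts:

```python
import cmath

def eig_2x2(a, b, c, d):
    """Eigenvalues of [[a, b], [c, d]] from the trace and determinant."""
    tr, det = a + d, a * d - b * c
    disc = cmath.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2

real_case = eig_2x2(-1, 0, 0, -2)      # eigenvalues -1 and -2
complex_case = eig_2x2(-1, 2, -2, -1)  # eigenvalues -1 + 2i and -1 - 2i

for name, eigs in (("real", real_case), ("complex", complex_case)):
    print(name, eigs, all(e.real < 0 for e in eigs))
```

Both cases decay to the origin; only the complex case spirals on the way in.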

[Back to Top]

-

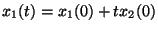

What is an example of a system with Re(\lambda)=0 that is not stable? What if Im(\lambda) is not zero?

Submitted by: asa

Submitted on: October 18, 2004

Identifier:

L4.1

As we discussed in class, this system:

which has two zero eigenvalues, has this solution:

which is clearly not stable.

For the second question, we have to go to a larger system, such as

. .

Here the eigenvalues are

each with multiplicity 2. The solution is quite complicated (it's on p. 36 and 37 of Perko, Differential Equations and Dynamical Systems) but you can see that you're going to have a similar effect as in the first example, and you'll have terms that grow linearly with time.
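One concrete pair of examples consistent with the description above, offered as an assumption rather than as the exact systems from class or from Perko, is the double integrator

$$
\dot{x} = \begin{bmatrix} 0 & 1 \\ 0 & 0 \end{bmatrix} x
\quad\Longrightarrow\quad
x_1(t) = x_1(0) + x_2(0)\,t, \qquad x_2(t) = x_2(0),
$$

where both eigenvalues are zero yet $x_1$ grows linearly whenever $x_2(0) \neq 0$. For purely imaginary eigenvalues, a four-state system with the same structure is

$$
A = \begin{bmatrix} J & I \\ 0 & J \end{bmatrix}, \quad
J = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix}, \quad
e^{At} = \begin{bmatrix} e^{Jt} & t\,e^{Jt} \\ 0 & e^{Jt} \end{bmatrix}, \quad
e^{Jt} = \begin{bmatrix} \cos t & \sin t \\ -\sin t & \cos t \end{bmatrix}.
$$

Here the eigenvalues are $\pm i$, each with multiplicity 2, and the $t\,e^{Jt}$ block produces terms such as $t\cos t$ whose amplitude grows linearly with time.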

[Back to Top]

-

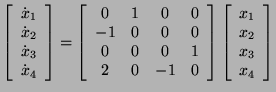

How do I determine how many Jordan blocks a matrix will have for a particular eigenvalue?

Submitted by: asa

Submitted on: October 20, 2004

Identifier:

L4.2

The size of a Jordan block m_i is determined from the characteristic polynomial

where n_i is the algebraic multiplicity of the eigenvalue.

The number of Jordan blocks for a particular eigenvalue s = lambda_i is

For more on Jordan Form, see http://mathworld.wolfram.com/JordanCanonicalForm.html.
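A standard linear algebra fact, stated here independently of the formulas referenced above, is that the number of Jordan blocks for an eigenvalue lambda equals its geometric multiplicity, n - rank(A - lambda*I). A minimal sketch using exact rational arithmetic (the helper names and the example matrices are assumptions for illustration):

```python
from fractions import Fraction

def rank(matrix):
    """Rank of a matrix via Gaussian elimination with exact fractions."""
    rows = [[Fraction(v) for v in row] for row in matrix]
    r = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        r += 1
    return r

def num_jordan_blocks(A, lam):
    """Number of Jordan blocks of A for eigenvalue lam: n - rank(A - lam*I)."""
    n = len(A)
    shifted = [[A[i][j] - (lam if i == j else 0) for j in range(n)] for i in range(n)]
    return n - rank(shifted)

A1 = [[2, 1, 0], [0, 2, 0], [0, 0, 2]]  # one 2x2 block and one 1x1 block
A2 = [[2, 0, 0], [0, 2, 0], [0, 0, 2]]  # three 1x1 blocks
print(num_jordan_blocks(A1, 2), num_jordan_blocks(A2, 2))
```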

[Back to Top]

-

Should there be a C in front of the second term of equation 4.10 in textbook?

Submitted by: jianghao

Submitted on: October 22, 2004

Identifier:

L4.2

Yes.

[Back to Top]

-

On page 100 of textbook, how do we get equation 4.13, and should the line immediately above this equation read "input is a unit step?"

Submitted by: jianghao

Submitted on: October 22, 2004

Identifier:

L4.2

Yes, the line above should read "input is a unit step". To get equation (4.13), set u = 1 in equation (4.10) and use the change of variables t' = t - tau.

[Back to Top]

-

How do we define the derivative of a step function as used in the next line? (If it were differentiable at t=0, it would also be continuous!?) How do we interpret the delta "function" in equation 4.14?

Submitted by: jianghao

Submitted on: October 22, 2004

Identifier:

L4.2

The last term in (4.13) is D*u(t), where u(t) is a step function. The derivative of a step function is a delta function (with infinite slope at t=0 and total area/intensity equal to unity). This differentiation is not defined in the ordinary sense; instead, distribution theory accounts for it. Also, a delta function can appear not only inside an integral, but also outside one.

[Back to Top]

-

Linmod is giving me all sorts of errors, and I'm getting weird things for 2a. What's wrong?

Submitted by: haomiao

Submitted on: October 23, 2004

Identifier:

H4

Before you run linmod, be sure that you have set values for all of the constants. Also, it isn't stated in the problem, but g = 9.8 m/s/s. Then, be sure you're giving linmod values of x and u to perform the linearization around, so your command should be something like:

x0 = [x1, x2, x3, x4], u0 = u;

[A, B, C, D] = linmod('model', x0, u0);

[Back to Top]

-

If the reachability matrix is not full rank, isn't it possible for the system to be able to reach many X's, just not all of them?

Submitted by: jianghao

Submitted on: October 25, 2004

Identifier:

L5.1

Yes, that is absolutely possible. In fact, the rank of the reachability matrix gives the dimension of the set of states that can be reached by some input. The next step is to find the set of reachable states itself, which (starting from the origin) is the span of the columns of the reachability matrix.
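A minimal sketch for a two-state, single-input system makes this concrete. The matrices below are assumed examples, and the rank test is specialized to the 2x2 case:

```python
def reachability_matrix_2state(A, B):
    """Return [B AB] for a 2-state, single-input system as a 2x2 list."""
    AB = [A[0][0] * B[0] + A[0][1] * B[1],
          A[1][0] * B[0] + A[1][1] * B[1]]
    return [[B[0], AB[0]], [B[1], AB[1]]]

def rank_2x2(M):
    """Rank of a 2x2 matrix using its determinant."""
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    if det != 0:
        return 2
    return 1 if any(v != 0 for row in M for v in row) else 0

A = [[1, 0], [0, 2]]
W_partial = reachability_matrix_2state(A, [1, 0])  # input only drives state 1
W_full = reachability_matrix_2state(A, [1, 1])     # input drives both states
print(rank_2x2(W_partial), rank_2x2(W_full))
```

In the rank-deficient case the reachable states are exactly those with second component zero: a one-dimensional set, so many states can be reached but not all of them.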

[Back to Top]

-

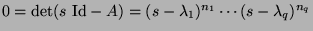

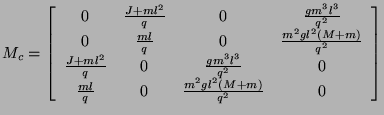

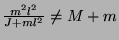

What do you mean by g(M+m) ~= 1 on slide 7? The units don't work out!

Submitted by: asa

Submitted on: October 25, 2004

Identifier:

L5.1

The culprit turns out to be that the reachability matrix on the slides isn't correct. The correct matrix is

. .

Using this matrix, the condition for the system to be reachable is that

. .

Here the units work out.

[Back to Top]

-

How do you choose the eigenvalues when using "place" in MATLAB?

Submitted by: waydo

Submitted on: October 25, 2004

Identifier:

L5.1

Good question! As we will see later in the quarter, the location of the eigenvalues affects things like rise time, overshoot, settling time, etc. When we do control design we often start with specifications on these properties such as "rise time less than 10 seconds" or "overshoot less than 5 percent". These specifications then guide our choice of eigenvalue locations. In principle, if we have a reachable linear system we can place the eigenvalues wherever we like using feedback. In reality our choices will be limited by nonlinear effects like saturation and stiction, which is why we generally design our controllers based on a linearization but then simulate the full nonlinear system for verification.

If you continue on to CDS110b, you will learn alternate methods for controller design that place the eigenvalues to optimize the behavior of the system relative to some performance metric.

[Back to Top]

-

In lecture you said we want to place the eigenvalues of (A+BK), but MATLAB's "place" places eigenvalues of A-BK. What's the deal?

Submitted by: waydo

Submitted on: October 27, 2004

Identifier:

L5.1, L5.2

Whether our closed loop dynamics matrix is A-BK or A+BK just depends on the sign convention we use when applying the feedback. Sometimes you will see the signal inverted as it is fed back (so u = -Kx), in which case the closed loop dynamics are given by A-BK, and sometimes it will not be inverted (so u = Kx), in which case we use A+BK. The two formulations are exactly equivalent, and the sign convention just determines the sign of the elements of K.

In the book and in MATLAB we use the A-BK sign convention, so we will be more careful to do this in lecture in the future.
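The equivalence of the two conventions can be checked on an assumed scalar system xdot = a*x + b*u (a toy example, not a model from the course). Placing the closed-loop eigenvalue at p gives gains that differ only in sign:

```python
def gain_negative_feedback(a, b, p):
    """K such that a - b*K = p, for the convention u = -K*x."""
    return (a - p) / b

def gain_positive_feedback(a, b, p):
    """K such that a + b*K = p, for the convention u = +K*x."""
    return (p - a) / b

a, b, p = 1.0, 1.0, -2.0
k_minus = gain_negative_feedback(a, b, p)
k_plus = gain_positive_feedback(a, b, p)
print(k_minus, a - b * k_minus)  # gain and resulting closed-loop eigenvalue
print(k_plus, a + b * k_plus)
```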

[Back to Top]

-

How come you can always find u(t) such that the integrals come out to be what is needed to drive the state where you want it to go if the reachability condition holds?

Submitted by: waydo

Submitted on: October 27, 2004

Identifier:

L5.2

The answer to this question is somewhat involved and beyond the scope of this course. For one take on it, check out Dullerud and Paganini, "A Course in Robust Control Theory: A Convex Approach" (Chapter 2).

If we consider the discrete-time case, however, the intuition is much easier. Let

x(k+1) = A x(k) + B u(k)

We can drive x to zero (or to any arbitrary other value) in n steps or less (where as usual n is the dimension of the system) if the reachability condition

rank( [B AB A^2B ... A^(n-1)B] ) = n

holds, just like in the continuous case. The difference is that in general it takes a finite number of steps to do this, rather than an arbitrarily short period of time as in the continuous case.

To see this, look at x as k increases:

x(k+2) = A x(k+1) + Bu(k+1)

= A^2 x(k) + ABu(k) + Bu(k+1)

...

...

x(k+n) = A^n x(k) + A^(n-1)Bu(k) + ... + ABu(k+n-2) + Bu(k+n-1)

Now all the u's can be chosen independently, so if the columns of

[B AB A^2B ... A^(n-1)B]

are linearly independent (i.e. the rank of this matrix is n) we can pick the u's to "cancel out" any A^n x(k), so we can drive the state to zero.

It isn't too hard to find a counterexample that shows that, depending on A, B, and x, we may not be able to drive the system to zero in less than n steps.

Also, the Cayley-Hamilton theorem tells us that A^n, A^(n+1), etc. are linearly dependent on I, A, A^2, ..., A^(n-1), so we can't do any better (i.e. we can't increase the rank of the reachability matrix if it is deficient) by considering driving the system to zero in more than n steps. Thus, if the system is reachable, we can certainly drive the state anywhere we want it to go in n steps or less, and if it is not, we can pick an initial condition and final condition such that we can't drive the initial condition to the final condition no matter how many steps we get to take.
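The n-step argument can be sketched for an assumed discrete-time double integrator with n = 2 (the matrices A and B below are illustrative choices). The two inputs are found by solving A^2 x(0) + AB u(0) + B u(1) = 0 with Cramer's rule, then checked by simulating the two steps:

```python
from fractions import Fraction

A = [[1, 1], [0, 1]]
B = [0, 1]

def mat_vec(M, v):
    """Product of a 2x2 matrix and a 2-vector."""
    return [M[0][0] * v[0] + M[0][1] * v[1], M[1][0] * v[0] + M[1][1] * v[1]]

def step(x, u):
    """One step of x(k+1) = A x(k) + B u(k)."""
    Ax = mat_vec(A, x)
    return [Ax[0] + B[0] * u, Ax[1] + B[1] * u]

def deadbeat_inputs(x0):
    """Solve A^2 x0 + AB u0 + B u1 = 0 for (u0, u1) by Cramer's rule."""
    AB = mat_vec(A, B)
    rhs = [-v for v in mat_vec(A, mat_vec(A, x0))]
    det = Fraction(AB[0] * B[1] - B[0] * AB[1])  # nonzero iff [B AB] has rank 2
    u0 = (rhs[0] * B[1] - B[0] * rhs[1]) / det
    u1 = (AB[0] * rhs[1] - rhs[0] * AB[1]) / det
    return u0, u1

x0 = [3, -2]
u0, u1 = deadbeat_inputs(x0)
x2 = step(step(x0, u0), u1)
print(u0, u1, x2)
```

If the determinant were zero, the reachability matrix would be rank deficient and the division would fail, matching the rank condition in the text.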

[Back to Top]

-

What is a "pole"?

Submitted by: asa

Submitted on: October 27, 2004

Identifier:

L5.2

A "pole" is the location of a singularity of a complex function. More generally, we talk about finding the "poles" and "zeros" of a function. If we imagine the function as the ratio of two functions, the poles are the zeros of the denominator, and the zeros are the zeros of the numerator. We will cover poles and zeros in much more depth when we talk about transfer functions in the next chapter.

[Back to Top]

-

Prof. Mabuchi mentioned that if the observability matrix is full rank, we may still have to wait some time to determine a derivative. Is this true?

Submitted by: waydo

Submitted on: October 29, 2004

Identifier:

L5.2

The question continues: If you know the dynamics, and they are linear, surely you can solve for the derivative given only one datum and initial conditions. For example, if you tell me initial conditions and position x, then immediately I can tell you x-dot.

There are a couple of issues here. First is that the observability matrix is telling us if, given measurements, we can back out what the initial condition was. The idea is that we don't know exactly where we are, only what our observations tell us, which may not give us the entire state. For example, we may measure our position but not our rate, so we have to use our measurements of position over time to determine the rate.

The second issue is one of noise. In an ideal world, the rank condition tells us that we can back out the state of the system from measurements not instantaneously, but arbitrarily fast. However, in the real world our measurements are noisy, and if we make our estimator converge very quickly our estimate will amplify the noise present in the measurements. The noisier the system, the longer it takes us to get a good idea of what the state is based on measurements.

In CDS110b we discuss how to strike an optimal balance between converging our estimator quickly and filtering out noisy data, a process known as "Kalman filtering."

[Back to Top]

-

Can we see that Engine Control example again?

Submitted by: asa

Submitted on: November 1, 2004

Identifier:

L6.1

Yes. The TAs will go over that example (or another very similar example) in section this week.

[Back to Top]

-

What on earth is a Laplace domain?

Submitted by: jianghao

Submitted on: November 1, 2004

Identifier:

L6.1

The Laplace domain is the complex frequency domain, whose variable is denoted by s in your transfer function. Why is the Laplace domain important? Because sometimes you will find that it is extremely efficient to analyze your system from the pole/zero point of view, and the poles and zeros can be extracted from the transfer function. In summary, the state space model is a time domain analysis method, while the Laplace domain is a complex frequency domain analysis method. They are both very useful.

[Back to Top]

-

What was the funny "D with a line through it" symbol on slide 1, and what did the "]" on slide 3 and 4 mean?

Submitted by: waydo

Submitted on: November 1, 2004

Identifier:

L6.1

Oops - it looks like we had some font translation issues. Try looking at the slides later when they get posted in pdf format. All of the symbols above were supposed to be a "phase" (angle) symbol: ∠

[Back to Top]

-

For question 2b, what value of Kp should we use?

Submitted by: haomiao

Submitted on: November 7, 2004

Identifier:

H5

It'd be easiest for us to grade if everyone used the same value, so let's just say Kp=500, as on the prior cruise control models. If you (for some reason) decide to use a different value, be sure you note what value you used.

[Back to Top]

-

Why does the book say sin(wt)+icos(wt)?

Submitted by: haomiao

Submitted on: November 8, 2004

Identifier:

L6.1

Section 6.3 of the textbook says e^(iwt) = sin(wt)+icos(wt) when it should say e^(iwt) = cos(wt)+isin(wt).
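The correction is easy to confirm numerically. In this small sketch (the function name is made up for illustration), the first returned value is the distance from e^(iwt) to the correct expression and the second is the distance to the misprinted one:

```python
import cmath
import math

def euler_gap(w, t):
    """Distances from e^(i*w*t) to the correct and the misprinted formula."""
    lhs = cmath.exp(1j * w * t)
    correct = complex(math.cos(w * t), math.sin(w * t))
    misprint = complex(math.sin(w * t), math.cos(w * t))
    return abs(lhs - correct), abs(lhs - misprint)

print(euler_gap(2.0, 0.3))
```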

[Back to Top]

-

What is the definition of bandwidth?

Submitted by: waydo

Submitted on: November 10, 2004

Identifier:

L7.1, H6

Bandwidth is the frequency at which the *closed-loop* transfer function has a gain of 1/sqrt(2) times its DC value. That is, if G(s) is the closed-loop transfer function, the bandwidth is the frequency w at which |G(jw)|/|G(0)| = 1/sqrt(2). You may see it mentioned that the bandwidth is the frequency at which the *open-loop* transfer function has gain 1 (0 dB), but this is only a useful approximation for design purposes. The approximation roughly works because the closed-loop gain is about 1/(1+1) = 1/2 when the open-loop gain is 1, but an error is introduced by the phase of the open-loop transfer function at the crossover frequency. After doing a design, you should always verify the bandwidth of the closed-loop system.
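To see how far apart the two numbers can be, here is a sketch for an assumed loop transfer function L(s) = 2/(s(s+2)) with unity feedback, so that G(s) = L/(1+L) = 2/(s^2 + 2s + 2). The example system and the bisection helper are illustrative assumptions:

```python
import math

def loop_tf(s):
    """Assumed open-loop transfer function L(s) = 2 / (s (s + 2))."""
    return 2.0 / (s * (s + 2.0))

def closed_loop_tf(s):
    """G(s) = L/(1 + L), simplified algebraically to 2 / (s^2 + 2 s + 2)."""
    return 2.0 / (s * s + 2.0 * s + 2.0)

def bisect(f, lo, hi, tol=1e-10):
    """Root of f in [lo, hi], assuming f changes sign on the interval."""
    f_lo = f(lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

dc_gain = abs(closed_loop_tf(0.0))
bandwidth = bisect(
    lambda w: abs(closed_loop_tf(1j * w)) / dc_gain - 1.0 / math.sqrt(2.0), 0.01, 100.0)
crossover = bisect(lambda w: abs(loop_tf(1j * w)) - 1.0, 0.01, 100.0)
print(f"closed-loop bandwidth = {bandwidth:.4f} rad/s")
print(f"open-loop crossover   = {crossover:.4f} rad/s")
```

For this example the crossover frequency noticeably underestimates the true bandwidth, which is why checking the closed-loop system after a design is worthwhile.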

[Back to Top]

-

Where can I find definitions for gain margin, phase margin, and stability margin?

Submitted by: asa

Submitted on: November 14, 2004

Identifier:

L7.1

Look in section 7.4, pages 177 and 178 of the textbook, for these definitions.

[Back to Top]

-

How do I calculate "steady state error"?

Submitted by: asa

Submitted on: November 14, 2004

Identifier:

H6

There is no direct MATLAB command to calculate the steady state error. However, if you look at the block diagram for our standard PC feedback loop, you'll see that the error is the input to the control block. You should then be able to calculate a transfer function H_{er} very straightforwardly. Think a bit about "steady state" and you'll see what value of s you need to evaluate this at to get the steady state error.

Also, if you know the final value theorem, and think about the final value of H_{er} for a step input, you should get the same answer. Note, however, that this analysis requires that the system is stable -- if it's not stable, then there is no limit for the final value, and "steady state error" isn't defined.
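As a sketch, assume a plant P(s) = 1/(s + 1) with a proportional controller C(s) = Kp in a unity feedback loop (these choices are illustrative, not the homework system). Then H_{er}(s) = 1/(1 + P(s)C(s)), and for a stable loop with a unit step reference the steady state error is H_{er} evaluated at zero frequency:

```python
def plant(s, a=1.0):
    """Assumed first-order plant P(s) = 1 / (s + a)."""
    return 1.0 / (s + a)

def controller(s, kp=9.0):
    """Proportional controller C(s) = Kp (independent of s)."""
    return kp

def h_er(s, kp=9.0):
    """Transfer function from reference to error, 1 / (1 + P C)."""
    return 1.0 / (1.0 + plant(s) * controller(s, kp))

print(h_er(0.0))  # steady state error for a unit step reference
```

Raising Kp shrinks this error but never removes it for this plant, which is one motivation for integral action.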

[Back to Top]

-

For problem 1, do we plot bode and nyquist plots for the open-loop or closed-loop system?

Submitted by: waydo

Submitted on: November 14, 2004

Identifier:

H6

We always generate these plots for the open-loop system. One of the remarkable things about these plots is that the open-loop plots can be used to tell us everything we will need to know about the closed-loop system.

[Back to Top]

-

How does overshoot relate to phase margin?

Submitted by: haomiao

Submitted on: November 21, 2004

Identifier:

H7

You can approximate the system as a 2nd order system by looking at the 2 dominant poles. Note that since poles on the real axis do not contribute to oscillatory behavior, they have no effect on the phase margin. Then, using the equations shown in Wednesday's lecture, you can get a requirement for the damping ratio zeta. Zeta is related to the phase margin through a somewhat complicated formula, which we can approximate by the linear relationship zeta ≈ PM/100, with PM in degrees.
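For reference, the standard second-order result, assuming the loop transfer function L(s) = wn^2/(s(s + 2*zeta*wn)), is PM = atan(2*zeta / sqrt(sqrt(1 + 4*zeta^4) - 2*zeta^2)). The sketch below compares it with the PM/100 rule of thumb (the function name is made up for illustration):

```python
import math

def phase_margin_deg(zeta):
    """Exact phase margin (degrees) for L(s) = wn^2 / (s (s + 2 zeta wn))."""
    crossover_ratio_sq = math.sqrt(1.0 + 4.0 * zeta ** 4) - 2.0 * zeta ** 2
    return math.degrees(math.atan(2.0 * zeta / math.sqrt(crossover_ratio_sq)))

for zeta in (0.2, 0.4, 0.5, 0.6):
    pm = phase_margin_deg(zeta)
    print(f"zeta={zeta:.1f}  PM={pm:.1f} deg  PM/100={pm / 100.0:.2f}")
```

The approximation is reasonable for moderate damping ratios and degrades as zeta grows.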

[Back to Top]

-

Is it OK for a system to have infinite gain margin?

Submitted by: waydo

Submitted on: November 22, 2004

Identifier:

H7

Absolutely. This just means that the phase never crosses -180, and we can turn up the gain as much as we like without causing instability (although in the real world turning up the gain too much will still lead to problems).

[Back to Top]

-

Isn't the sensitivity function the same as the function used for steady state error? How are they related?

Submitted by: asa

Submitted on: November 22, 2004

Identifier:

L9.1

Yes, the sensitivity function is the transfer function from the reference to the error, which we evaluate at s=0 to determine the steady-state error. More generally, this function tells us about tracking error at whatever frequency -- the steady state error is just the tracking error at zero frequency.

[Back to Top]

-

For 2a, what do you mean by "less negative phase"?

Submitted by: asa

Submitted on: November 29, 2004

Identifier:

H8

Recall that 360 degrees of phase is the same as zero. The Bode plot of the new transfer function should have an identical magnitude section, but the phase should be closer to zero (modulo 360 degrees) than the phase of the given transfer function.

[Back to Top]

-

How does settling time relate to the root locus plot?

Submitted by: asa

Submitted on: November 29, 2004

Identifier:

H8

If you look back at slide 7 from lecture 8.2, you'll see that you can learn about the settling time from the dominant poles. You should be able to see those poles on a root locus, and see how they move, which changes the settling time.

[Back to Top]

-

What's the difference between calculating the tracking error with mag(L) vs. the sensitivity function?

Submitted by: asa

Submitted on: November 29, 2004

Identifier:

H7, H8

For exact calculations of the frequency at which the error is less than a certain bound, you should use H_{er}, the sensitivity function. This gives you a measure of the error directly related to the input. The magnitude of L can be used as a quick approximation -- generally, 1/(1+mag(L)) will be close to mag(1/(1+L)). However, for precise work (i.e. not approximations), you should use H_{er}.
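The gap between the two quantities can be seen on an assumed loop transfer function L(s) = 2/(s(s+2)), which is an illustrative choice rather than a homework system. By the triangle inequality |1 + L| <= 1 + |L|, so the quick estimate never exceeds the exact value:

```python
def loop_tf(s):
    """Assumed loop transfer function L(s) = 2 / (s (s + 2))."""
    return 2.0 / (s * (s + 2.0))

def exact_error_gain(w):
    """Magnitude of the sensitivity function, |1 / (1 + L(jw))|."""
    return abs(1.0 / (1.0 + loop_tf(1j * w)))

def approx_error_gain(w):
    """Quick estimate from the magnitude of L alone, 1 / (1 + |L(jw)|)."""
    return 1.0 / (1.0 + abs(loop_tf(1j * w)))

for w in (0.01, 0.1, 1.0, 10.0):
    print(f"w={w:6.2f}  exact={exact_error_gain(w):.4f}  approx={approx_error_gain(w):.4f}")
```

The two agree well where |L| is large (low frequency here) and drift apart near crossover, where the phase of L matters most.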

[Back to Top]

-

What do you mean by "If the integral is surjective (as a linear operator), then we can find an input to achieve any desired state"?

Submitted by: asa

Submitted on: December 6, 2004

Identifier:

L5.1

Surjective is what is commonly known as "onto". That is, if we have an operator f: X -> Y, then the image of f is all of Y. In this context, we have a linear operator (the operator, with integral, etc., that takes a system state plus input at time 0 to the system state at time T). This is an operator from the space of (initial conditions (cross) inputs u(tau)) to the space of (final system states). We really want to consider just the input term (the integral), which is an operator from the inputs u(tau) to system states. If this operator is surjective, that means we can reach any final state (accounting for the given initial condition), as long as we have complete control over the input.

[Back to Top]

|